Shadow AI: the hidden AI risk already inside your business

Most shadow AI use does not begin with a clever AI initiative. It begins with a deadline. Someone needs to summarise customer complaints before a meeting. A technical team needs help troubleshooting a configuration issue. A sales team wants to sharpen an RFP response. An HR manager needs a clearer first draft of a sensitive document.

So they open a public artificial intelligence (AI) tool, paste in the information they have and get the help they need.

From the employee’s point of view, they are being productive. From the organisation’s point of view, customer data, employee records, technical architecture, commercial information or regulated data may have just left the business without approval, logging, classification or control.

That is the risk of shadow AI.

What business leaders need to know about shadow AI

What is shadow AI?

Shadow AI is the unsanctioned and unmonitored use of generative AI tools by employees, outside the visibility of IT, security, compliance and leadership teams. It may involve AI tools for productivity such as ChatGPT, Gemini, Claude, Copilot or hosted open-source models. The tools themselves are not the problem. The issue is how they are being used, what data is being shared, which models are processing that data, and whether the business can prove what happened afterwards.

You do not need to choose between AI adoption and AI governance. That is a false trade-off, because governance depends on adoption: when the sanctioned route is slow, unclear or less useful than the public tool, people will find another route.

Why shadow AI is more urgent than shadow IT

Business leaders understand shadow IT. A team signs up for a SaaS tool without approval. A department starts using software outside procurement. IT later discovers it, assesses the risk and brings it under control.

Shadow AI moves faster. With shadow IT, the main concern is often unauthorised software or infrastructure. With shadow AI, the primary risk is unauthorised data sharing with external AI models. A single prompt can expose regulated, sensitive or commercially valuable data instantly. It may happen through a browser, with no installation and no obvious endpoint footprint.

That changes the governance challenge. A user does not need admin rights or budget approval to create risk. They only need access to a public AI tool and a business problem they want to solve quickly.

The business risks of unmanaged AI use

For business leaders, the concern is not simply that employees are using AI. The concern is that the organisation may have no reliable answer to basic governance questions.

Data privacy and regulatory exposure

Sensitive customer information, employee records, financial data or sector-specific regulated information can be sent to external AI providers without the correct legal, contractual or compliance controls. This creates risk under privacy laws, sector regulations and internal data governance obligations. It also complicates breach response, because the business may not know what was exposed or where it went.

Weak auditability

If AI activity is not logged, the business has no clear audit trail. That matters when leadership, compliance, legal, internal audit or regulators need evidence. Without prompt logs, routing records and policy actions, the organisation may be left with assumptions instead of facts.

Loss of customer and employee trust

Customers expect businesses to handle their information responsibly. Employees expect sensitive HR, health, salary and performance data to be protected. Shadow AI can undermine that trust quickly, especially if the business cannot explain how information was used or controlled.

Inconsistent outputs and decisions

Unmanaged AI also creates quality risk. Different employees may use different tools, prompts and data sources. The business may have no control over approved use cases, no standardised prompt guidance, and no process for reviewing outputs before they influence decisions. That is not a reliable operating model.

Commercial and security exposure

Prompts may include pricing models, product plans, contracts, source code, network architecture or strategic documents. In the wrong context, that information can create competitive, legal or security risk. The issue is not only whether data is “personal”. It is whether the data should ever leave the organisation’s controlled environment.

How we help you govern shadow AI

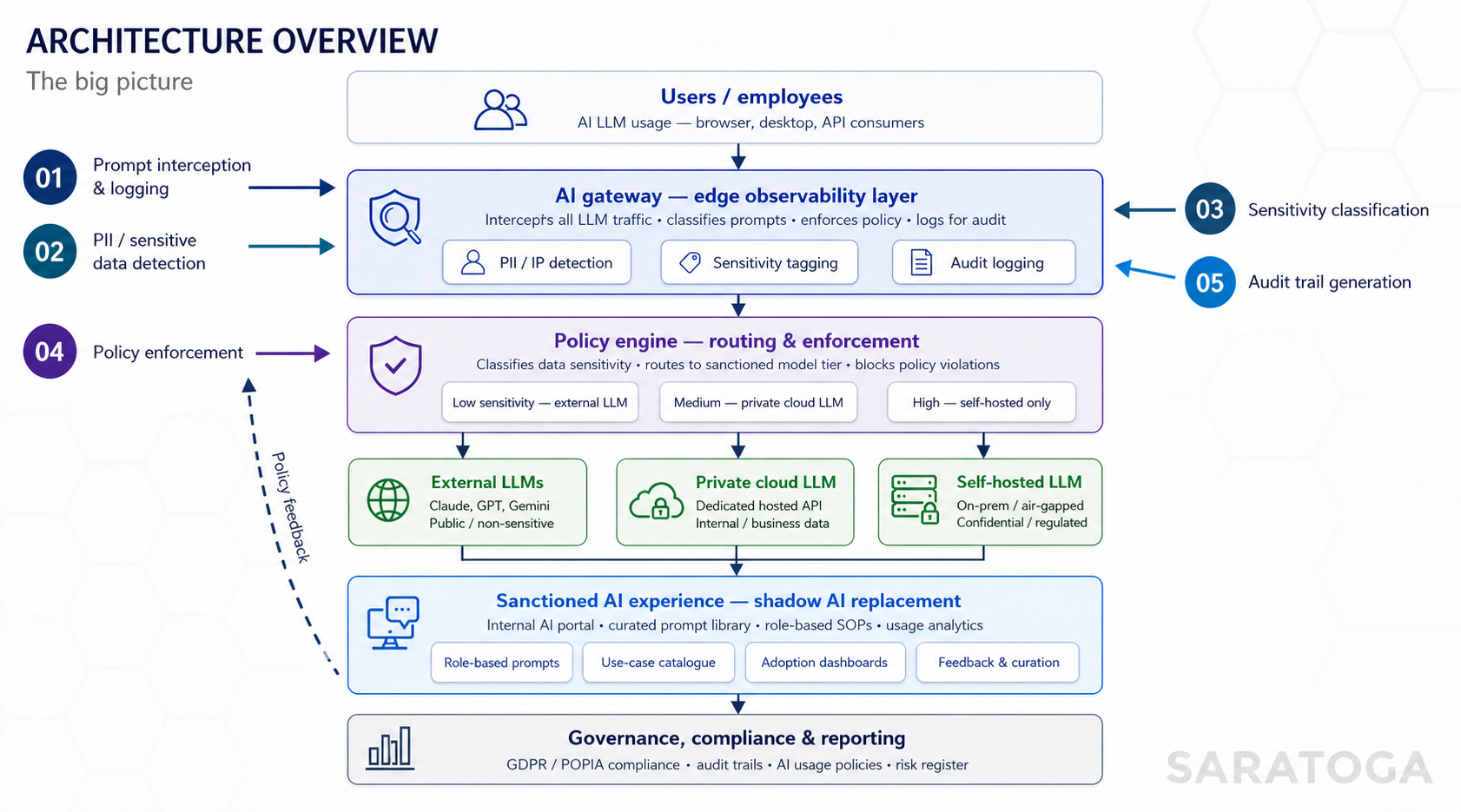

Our compliance-first AI approach is designed to help you govern the AI usage you often cannot see today.

Your users do not need to understand every technical tier. They need a useful AI experience. You need control, visibility and evidence.

Why blocking AI is not enough

A blanket ban may look decisive, but it rarely solves the underlying problem. People use AI because they have work to do. If the approved options are poor, unclear or unavailable, employees will keep looking for ways to get the benefit. That is why AI governance cannot be designed only as a restriction layer.

A governed approach keeps the business in control while making the approved route practical enough for real work.

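A governed AI experience should be useful enough that people choose it, while keeping access, classification, routing, redaction and audit logging under the business's control.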

This is how businesses reduce shadow AI without slowing down responsible adoption.

What Saratoga’s governed AI gives you

Our governed AI experience is built around four business outcomes: visibility, control, adoption and accountability.

Visibility: know where AI is being used

You cannot govern what you cannot see. A governed AI environment gives the business visibility into AI prompts, responses, users, models, destinations and data flows. This does not mean creating unnecessary friction for every interaction. It means making AI activity observable so the organisation can understand usage, risk and adoption.

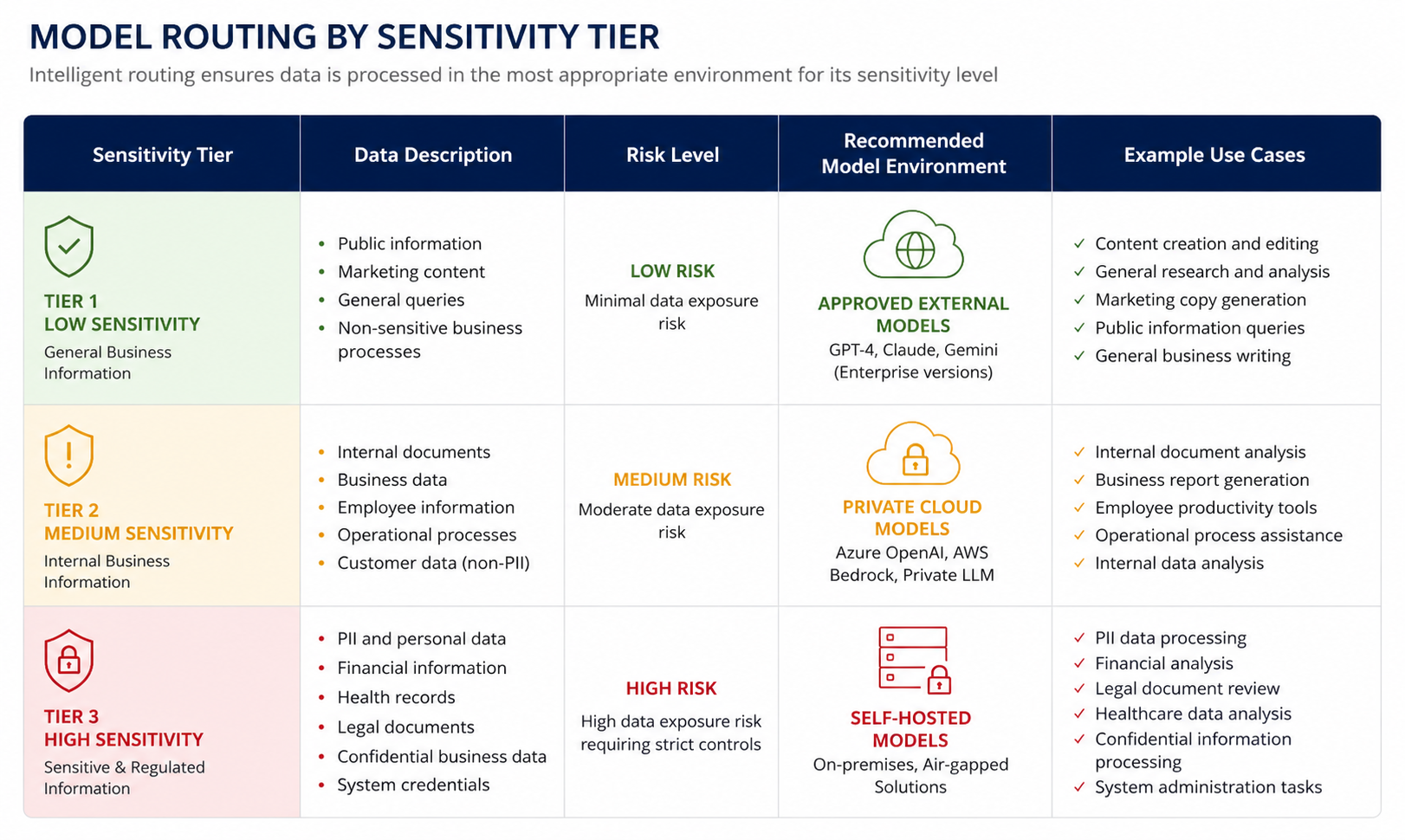

Control: classify, redact, route or block based on risk

Not every AI prompt carries the same risk. A request to improve the wording of a public product description is different from a prompt containing customer account numbers, internal network diagrams or employee medical notes.

A strong governance model classifies prompts by sensitivity and applies the right action. Low-risk prompts may be allowed. Medium-risk prompts may be routed to a private cloud model. High-risk prompts may need to be processed only in a self-hosted or controlled environment. Some prompts may need redaction or blocking. This is more practical than blanket restriction because it reflects how work actually happens.
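The tiered model above can be sketched as a classifier feeding a policy table. This is a minimal illustration, not Saratoga's implementation: the regular-expression rules, the sensitivity labels and the `Action` names are all hypothetical, and a real deployment would rely on a proper data-classification service rather than a few patterns.

```python
import re
from enum import Enum


class Action(Enum):
    ALLOW_PUBLIC = "allow: public model"
    PRIVATE_CLOUD = "route: private cloud model"
    SELF_HOSTED = "route: self-hosted model"
    BLOCK = "block"


# Hypothetical detection rules, checked from most to least sensitive.
BLOCK_PATTERNS = [
    re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),  # credentials never leave
]
HIGH_RISK_PATTERNS = [
    re.compile(r"\b\d{8,12}\b"),  # account-number-like digit runs
    re.compile(r"\b(diagnosis|medical|sick leave)\b", re.IGNORECASE),
]
MEDIUM_RISK_PATTERNS = [
    re.compile(r"\b(pricing|contract|roadmap|internal)\b", re.IGNORECASE),
]


def classify(prompt: str) -> str:
    """Return a sensitivity label: 'restricted', 'high', 'medium' or 'low'."""
    if any(p.search(prompt) for p in BLOCK_PATTERNS):
        return "restricted"
    if any(p.search(prompt) for p in HIGH_RISK_PATTERNS):
        return "high"
    if any(p.search(prompt) for p in MEDIUM_RISK_PATTERNS):
        return "medium"
    return "low"


# Policy table mapping each sensitivity label to an action.
POLICY = {
    "low": Action.ALLOW_PUBLIC,
    "medium": Action.PRIVATE_CLOUD,
    "high": Action.SELF_HOSTED,
    "restricted": Action.BLOCK,
}


def route(prompt: str) -> Action:
    """Classify the prompt, then look up the action the policy assigns to it."""
    return POLICY[classify(prompt)]


if __name__ == "__main__":
    for text in [
        "Improve the wording of this public product description.",
        "Summarise the pricing changes in our partner contract.",
        "Customer 123456789 says their invoice is wrong.",
    ]:
        print(route(text).value, "<-", text)
```

Keeping the policy in a separate table means compliance teams can change which tier a label maps to without touching the detection rules.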

Adoption: make the approved route the easiest route

Governed AI will only work if people use it. That means the user experience matters. Employees need an intuitive chat interface, curated prompt libraries, role-based workspaces and approved use-case catalogues that help them do their work better.

A network engineer should not have the same AI workspace as an HR business partner. A marketing analyst should see approved prompts for customer insight and campaign analysis. A bid team should have support for RFP responses, proposal drafts and content refinement within controlled boundaries. Good packaging drives adoption. When the sanctioned AI experience is useful, people have less reason to use risky alternatives.

Accountability: create the evidence trail

Governance needs evidence. Prompt interception, response logging, policy actions, sensitivity classifications and routing decisions should be captured in an audit trail. This gives the business a record of who prompted what, when, which model was used, what data was included, and what was redacted, blocked or approved. That matters for internal investigations, breach response, compliance reporting and leadership oversight.
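One way to capture that evidence trail is to write one structured record per AI interaction. The sketch below assumes a simple JSON Lines file; the `AuditRecord` fields are hypothetical and chosen to mirror the questions above (who, when, which model, what was included, what action was taken). A production system would more likely ship these records to a SIEM or log platform.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class AuditRecord:
    """One entry in the AI audit trail (field names are illustrative)."""
    user: str                # who prompted
    model: str               # which model processed the request
    sensitivity: str         # classification label applied to the prompt
    policy_action: str       # e.g. "allowed", "redacted", "blocked"
    prompt: str              # prompt as sent, after any redaction
    redactions: list[str] = field(default_factory=list)  # what was removed
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )                        # when, in UTC


def to_log_line(record: AuditRecord) -> str:
    """Serialise a record as a single JSON line with stable key order."""
    return json.dumps(asdict(record), sort_keys=True)


def append_audit(record: AuditRecord, path: str) -> None:
    """Append the record to a JSON Lines file so it can be searched later."""
    with open(path, "a", encoding="utf-8") as fh:
        fh.write(to_log_line(record) + "\n")


if __name__ == "__main__":
    rec = AuditRecord(
        user="j.smith",
        model="private-cloud-llm",
        sensitivity="high",
        policy_action="redacted",
        prompt="Customer [ACCOUNT NUMBER REDACTED] disputes an invoice.",
        redactions=["account_number"],
    )
    print(to_log_line(rec))
```

Logging the prompt after redaction, rather than before, avoids turning the audit trail itself into a second copy of the sensitive data.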

What the user sees versus what the business controls

The best governed AI experiences feel simple to the employee. They may see a clean chat interface, a library of approved prompts, role-specific tools and practical suggestions for what they can do with AI. If they paste in a customer account number, they may see a message explaining that sensitive data was detected and redacted before processing.

Behind the scenes, the business controls the important parts: access, routing, classification, redaction, model selection, policy enforcement and audit logging. This is how sanctioned AI can replace shadow AI: not by forcing people into a slower process, but by giving them a safer and more useful one.
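The redaction step in the account-number example can be sketched as a substitution that also produces the message the user sees. This assumes, purely for illustration, that any run of 8 to 12 digits is a customer account number; real detection rules would be specific to the organisation's data formats.

```python
import re
from typing import Optional, Tuple

# Hypothetical rule: treat standalone runs of 8-12 digits as account numbers.
ACCOUNT_NUMBER = re.compile(r"\b\d{8,12}\b")


def redact(prompt: str) -> Tuple[str, Optional[str]]:
    """Replace account-number-like values; return (clean_prompt, user_notice)."""
    cleaned, count = ACCOUNT_NUMBER.subn("[ACCOUNT NUMBER REDACTED]", prompt)
    if count == 0:
        return prompt, None  # nothing sensitive found, no notice needed
    notice = (
        f"Sensitive data detected: {count} account number(s) "
        "were redacted before processing."
    )
    return cleaned, notice


if __name__ == "__main__":
    text, message = redact("Customer 123456789 disputes invoice 42.")
    print(text)
    print(message)
```

Only the cleaned prompt would be forwarded to the model; the notice goes back to the employee so the control is visible rather than silent.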

What business leaders should do next

The first step is to assume some level of shadow AI is already happening. That is not a criticism of your people. It is a realistic view of how useful technology spreads when teams are under pressure.

The next step is to assess the risk properly.

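Business leaders should ask which teams are using AI and for what, what data is being shared, which models are processing that data, and whether the business could prove what happened afterwards.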

These questions help move AI governance from policy theory into operational reality.

Shadow AI is manageable if you make it visible

Shadow AI is not a reason to slow down AI adoption. It is a reason to govern it properly.

Your people are going to use AI because AI is useful. The leadership responsibility is to make sure that usage is visible, controlled, compliant and aligned to the way your business manages data, risk and accountability.

Saratoga helps businesses design and implement governed AI environments that support adoption without accepting invisible risk. With the right architecture, user experience and compliance model, AI can move out of the shadows and into a framework your teams can trust.

FAQs about shadow AI, AI governance and safe AI adoption

What is shadow AI in business?

Shadow AI is the use of generative AI tools by employees without formal approval, monitoring or governance. It usually happens when teams use public AI tools to complete work tasks faster, without IT, security or compliance teams having visibility into the data being shared.

Why is shadow AI a business risk?

Shadow AI can expose customer data, employee records, financial information, technical architecture, intellectual property or regulated business information to external AI models. It also creates audit and accountability gaps because the business may not know what was shared, who shared it or how the output was used.

How is shadow AI different from shadow IT?

Shadow IT usually involves unauthorised software or infrastructure. Shadow AI creates a more immediate data-sharing risk because sensitive information can be sent to an external AI model in a single prompt. It is also harder to detect because many AI tools are browser-based and require no installation.

Should companies ban public AI tools?

A ban may reduce some exposure, but it is unlikely to stop AI use completely. Employees often turn to AI because it helps them work faster. A better approach is to provide a governed AI experience that is useful, approved and built with the right controls.

What is an AI gateway?

An AI gateway is a control layer between users and AI models. It can intercept prompts, detect sensitive data, classify risk, apply policy, route requests to the right model and log activity for audit. This helps businesses govern AI use without forcing every employee to understand the technical detail.
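The gateway pattern can be sketched as a wrapper that every request passes through before it reaches a model. In this minimal example the model backends are plain Python functions standing in for real AI endpoints, and the single detection rule, the route names and the log format are hypothetical assumptions rather than any product's API.

```python
import re
from dataclasses import dataclass, field
from typing import Callable, Dict, List

Model = Callable[[str], str]  # any function that takes a prompt and returns text

# Hypothetical detector: standalone runs of 8-12 digits count as sensitive.
SENSITIVE = re.compile(r"\b\d{8,12}\b")


@dataclass
class Gateway:
    """Illustrative control layer sitting between users and AI models."""
    models: Dict[str, Model]  # route name -> model backend
    log: List[dict] = field(default_factory=list)

    def handle(self, user: str, prompt: str) -> str:
        # 1. Intercept the prompt and detect sensitive data before it leaves.
        hits = SENSITIVE.findall(prompt)
        # 2. Apply policy: prompts with sensitive data stay on the private route.
        route = "private" if hits else "public"
        # 3. Forward the request to the model the policy selected.
        response = self.models[route](prompt)
        # 4. Record the decision for audit.
        self.log.append({"user": user, "route": route, "sensitive_hits": len(hits)})
        return response


if __name__ == "__main__":
    gw = Gateway(models={
        "public": lambda p: "reply from public model",
        "private": lambda p: "reply from private model",
    })
    print(gw.handle("alice", "Rewrite this product blurb."))
    print(gw.handle("bob", "Why was account 123456789 charged twice?"))
    print(gw.log)
```

Because users only ever call `handle`, the routing and logging rules can change centrally without employees needing to know which model answered.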

How can businesses reduce shadow AI risk?

Businesses can reduce shadow AI risk by identifying likely use cases, providing approved AI tools, detecting sensitive data in prompts, enforcing policy automatically, routing requests based on sensitivity and keeping audit trails of AI activity.

What does safe AI adoption look like?

Safe AI adoption gives employees practical access to AI while protecting the organisation’s data, compliance position and accountability. It combines a useful user experience with governance controls behind the scenes, including role-based access, prompt libraries, smart guardrails and audit-ready reporting.

Move AI out of the shadows

Shadow AI is already happening in many organisations. The opportunity now is to make AI usage visible, useful, compliant and controlled without making your people’s work harder.

Talk to Saratoga about designing a governed AI environment that supports adoption, protects sensitive data and gives your business the evidence trail it needs.